AI and Disinformation in Social Media: Risks & Opportunities for SMBs

What you see is no longer what actually happened. As of February 2026, AI-generated videos, images and texts on social media have become so convincing that even experienced journalists can’t always distinguish them from authentic content. Deepfakes influence elections, synthetic bot networks steer public debates, and “decline porn” (AI-generated images of urban decay) drives millions of people into fear and political radicalization. At the same time, AI also offers opportunities: better content moderation, creative tools and smarter fact-checking. This article shows what’s happening right now, who’s behind it and what businesses and individuals can do about it.

What Are Deepfakes — and Why Are They So Dangerous in 2026?

Deepfakes are synthetic media — videos, images or audio files — created using artificial intelligence that convincingly replicate real people or events. What was still recognizably amateurish just a few years ago has reached a level in 2026 that surpasses professional-grade production: voices are cloned in real time, faces are inserted into existing videos, and entire scenarios are generated from scratch — complete with natural lip movements, authentic lighting and coherent background sounds.

The problem isn’t the technology itself — it’s its accessibility. Tools like OpenAI’s Sora, xAI’s Grok and numerous open-source models allow virtually anyone to create hyperrealistic synthetic media. Adam Mosseri, head of Instagram, summed up the situation: “Authenticity is becoming infinitely reproducible.”

AI Bots and Synthetic Content: The Invisible Army

Deepfakes are only the most visible tip of a much larger problem. Beneath the surface, AI-powered bot networks coordinate to spread disinformation. These bots are no longer crude spam accounts — they conduct human-like conversations, respond contextually to current events and build credible profiles over months.

A particularly insidious variant involves pro-Russian AI-generated propaganda videos circulating on social media platforms. MIT Technology Review reported in February 2026 that comments on such videos pointing out AI generation received significantly less engagement than comments treating the content as real. The platforms’ algorithms effectively reward the spread of disinformation. Even more concerning: growing evidence shows that people are influenced by AI-generated content “even when they know that it is false.”

According to a study cited by The Verge in February 2026, AI-generated content already accounted for 10 percent of YouTube’s fastest-growing channels in July 2024. That share has likely increased substantially since then. The platforms profit from this: more content means more engagement, more engagement means more ad revenue. Ben Colman, CEO of Reality Defender, a deepfake detection company, told The Verge: “Platforms have wholeheartedly embraced deepfakes and AI slop […] it’s yet another kind of content to keep users on the platform longer and push more ads.”

“Decline Porn”: How AI-Generated Decay Images Deliberately Deceive Older Generations

A particularly alarming phenomenon was documented by BBC journalist Marianna Spring in February 2026: so-called “decline porn.” These are AI-generated videos depicting British city centers as neglected, crime-ridden wastelands — even though the scenes shown never actually occurred.

The creator of these videos, operating under the pseudonym “RadialB,” openly admitted in an interview that he deliberately targets an older demographic: people between 50 and 60 who didn’t grow up with AI and can’t recognize the generated images as synthetic. The result is visible in the comment sections of these videos: Spring described seeing “50-year-olds and 60-year-olds in the comments raging and saying all this political stuff.” People are forming political opinions based on AI-generated fabrications.

TikTok user C.Tino, who exposed these videos as AI-generated, warned: “These videos are making people think this is real life. It’s becoming out of hand now.”

The real-world impact is measurable: YouGov data shows that a majority of Britons believe London is unsafe — even though 81 percent of Londoners themselves say their own neighborhood is safe. AI-generated decay imagery reinforces a distorted perception of reality and drives political radicalization. Elon Musk himself shared such narratives, commenting: “What I see happening is a destruction of Britain. Initially a slow erosion, but a rapidly increasing erosion of Britain with massive uncontrolled migration.” — a statement visually “supported” by AI-generated images of alleged decay that never existed.

Impact on Politics, Business and Society

AI-generated disinformation isn’t an abstract technology problem — it directly intervenes in democratic processes, business decisions and interpersonal relationships.

Politics: When Facts Become Arbitrary

The political dimension is particularly concerning. In February 2026, MIT Technology Review reported that the White House shared a manipulated image of a protester in Minnesota and subsequently mocked those who questioned its authenticity. Simultaneously, the US Department of Homeland Security (DHS) uses AI tools from Google and Adobe to create content shared with the public. When governments themselves deploy synthetic media and blur the boundaries between authentic and generated content, it becomes virtually impossible for citizens to make informed democratic decisions.

Eric Horvitz, Microsoft’s Chief Scientific Officer, emphasized in his MIT Technology Review interview: “Governments have not been outside the sectors that have been behind various kinds of manipulative disinformation.”

In the US, the Trump administration also canceled grants for disinformation research: the DOGE initiative cut NSF grants that funded scientific study of disinformation. As a result, independent research into a rapidly growing problem is losing its funding.

Business: Loss of Trust as a Business Risk

For businesses — especially SMBs — AI disinformation has direct economic consequences. Deepfake scams, where executives’ voices are cloned for fraudulent wire transfers, cause billions in damages annually. But even without direct fraud losses, the general erosion of trust in digital content undermines online marketing: when customers can no longer be sure whether a testimonial is genuine or a product video is authentic, conversion rates suffer — and so does revenue.

Relationships: The Personal Dimension

The impact on interpersonal relationships is equally severe, if less visible. Paul Vane, Information Commissioner of Jersey, put it this way in February 2026: “AI tools that can generate or alter images and video are advancing rapidly. When used responsibly they hold tremendous promise. But as recent reporting makes clear, they are already being used to harm real people.” Sixty regulatory authorities worldwide signed a joint statement on AI-generated imagery practices.

Where Do Tech Companies Stand? A Position Overview

Is Big Tech serious about fighting AI disinformation? Verge journalist Jess Weatherbed answered that question on February 23, 2026, with a devastating analysis. Her central thesis: the platforms profit from AI-generated content and therefore have no economic incentive to truly solve the problem.

X (Twitter) and Elon Musk: The Biggest Step Backward

X — formerly Twitter — was a founding member of C2PA (Coalition for Content Provenance and Authenticity), the most important industry standard for labeling synthetic content. After Elon Musk’s acquisition, the platform withdrew from the initiative. Andy Parsons, Senior Director of Content Authenticity at Adobe, confirmed to The Verge that X is “not involved with C2PA.”

The consequences are severe: Musk himself shared misleading deepfakes on his own platform, and Grok, xAI’s in-house AI model, generated violent and sexualized deepfakes. The Verge stated that X has “no interest in protecting its 270 million daily users from AI fakery.”

Meta / Instagram: Between Lip Service and Business Interests

Meta joined C2PA in September 2024 and introduced “AI Info” labels on Instagram. The reality, however, is sobering: according to The Verge, the label is small, hard to find, inconsistently placed and sometimes completely absent on desktop. Meanwhile, Meta is building an Instagram alternative that, according to Weatherbed’s research, is “entirely AI slop” — a platform made entirely of AI-generated content.

Hany Farid, professor of digital forensics at UC Berkeley, summed up the dilemma: “If the Mark Zuckerbergs and the Elon Musks of the world think that putting ‘AI generated’ labels on something will reduce engagement, then of course they’re incentivized not to do it.”

Google and SynthID: The Most Ambitious Technical Approach

Google DeepMind is pursuing the most ambitious technical approach with SynthID: invisible digital watermarks embedded in AI-generated images, audio, text and video that survive modifications like cropping, filters or compression. A SynthID Detector portal enables verification, and integration with Gemini allows users to check content provenance. At the same time, Google is increasingly replacing original news headlines on YouTube with AI-generated summaries — a contradiction that undermines the credibility of its own transparency initiative.

OpenAI: C2PA Member with Caveats

OpenAI sits on C2PA’s Steering Committee and publicly commits to responsible deployment: “We cautiously and gradually release new AI systems—with substantial safeguards in place.” At the same time, the company openly acknowledges that C2PA metadata can “easily be removed either accidentally or intentionally.” With Sora, OpenAI offers a powerful AI video tool that generates high-quality synthetic videos for paying subscribers.

Anthropic: Safety as a Business Model

Anthropic, the company behind Claude, occupies a unique position. It doesn’t operate its own social media platform and explicitly refused Pentagon military use. In its published “Core Views on AI Safety,” the company stated: “We do not know how to train systems to robustly behave well.” Anthropic promises to “sound the alarm” if evidence shows that AI safety is an unsolvable problem.

Microsoft: Between Ambition and Inconsistency

Microsoft co-founded C2PA in 2021 and published a new “Blueprint” for content authentication in February 2026, evaluating 60 different combinations of provenance and watermark methods. Eric Horvitz, Microsoft’s Chief Scientific Officer, emphasized: “It’s not about making any decisions about what’s true and not true. It’s about coming up with labels that just tell folks where stuff came from.” Critics point out, however, that Microsoft doesn’t consistently implement its own recommendations in its products and, as a major investor in OpenAI, profits economically from AI content production.

C2PA and AI Labeling: Does It Actually Work?

The Coalition for Content Provenance and Authenticity (C2PA) is currently the most important industry standard for labeling synthetic content. Co-founded in 2021 by Adobe, Microsoft, Intel and the BBC, C2PA defines technical specifications that give digital content a type of digital certificate of origin — similar to an authenticity seal for images, videos and audio files.

The problem: C2PA operates on a voluntary basis. The Verge described the standard as a “glorified honor system” and emphasized that it was never designed as the ultimate solution against deepfakes. Andy Parsons of Adobe confirmed: “This is not a silver bullet. It does solve a whole class of problems.”
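The “honor system” limitation can be illustrated with a small Python sketch. This is a conceptual toy, not the C2PA format: it assumes a simple record consisting of a content hash, a claimed generator and a keyed signature, whereas real provenance systems use certificate-based signatures. The key, the function names and the generator name are hypothetical. The sketch shows that a signed record lets you detect tampering, but content that arrives with no record at all tells you nothing.

```python
import hashlib
import hmac
import json
from typing import Optional

# Hypothetical shared key for illustration only; real systems use certificates.
SIGNING_KEY = b"demo-key-not-for-production"


def make_manifest(content: bytes, generator: str) -> dict:
    """Build a toy provenance record: content hash, claimed origin, signature."""
    claim = {"sha256": hashlib.sha256(content).hexdigest(), "generator": generator}
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify(content: bytes, manifest: Optional[dict]) -> str:
    """Return 'verified', 'tampered' or 'no provenance' for a piece of content."""
    if manifest is None:
        # A stripped or never-attached record proves nothing either way.
        return "no provenance"
    payload = json.dumps(manifest["claim"], sort_keys=True).encode()
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return "tampered"  # the claim itself was altered
    if hashlib.sha256(content).hexdigest() != manifest["claim"]["sha256"]:
        return "tampered"  # the content no longer matches the signed hash
    return "verified"


image = b"synthetic image bytes"
manifest = make_manifest(image, generator="example-image-model")

print(verify(image, manifest))         # verified
print(verify(image + b"x", manifest))  # tampered
print(verify(image, None))             # no provenance
```

The third case is the weak point: anyone who removes the record, accidentally or on purpose, turns labeled content into content with no signal at all.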

The numbers tell a clear story: an Indicator study cited by The Verge found that only 30 percent of tested AI-generated posts on platforms like Instagram, LinkedIn, Pinterest, TikTok and YouTube were correctly labeled as AI-generated. 70 percent remained unmarked. On X/Twitter, the rate is zero percent — there is simply no AI labeling whatsoever. Only Google’s SynthID achieves a detection rate of approximately 80 percent in internal tests — but only for content generated with Google’s own tools.

A study published in Nature further found that transparency warnings alone are insufficient. The researchers reported “little empirical evidence to support the effectiveness of AI transparency.” Even when users know content is AI-generated, it still influences their opinion formation.

California’s AI Transparency Act takes effect in August 2026 — a law that makes AI labeling mandatory for the first time. Whether it can serve as a model for global regulation remains to be seen.

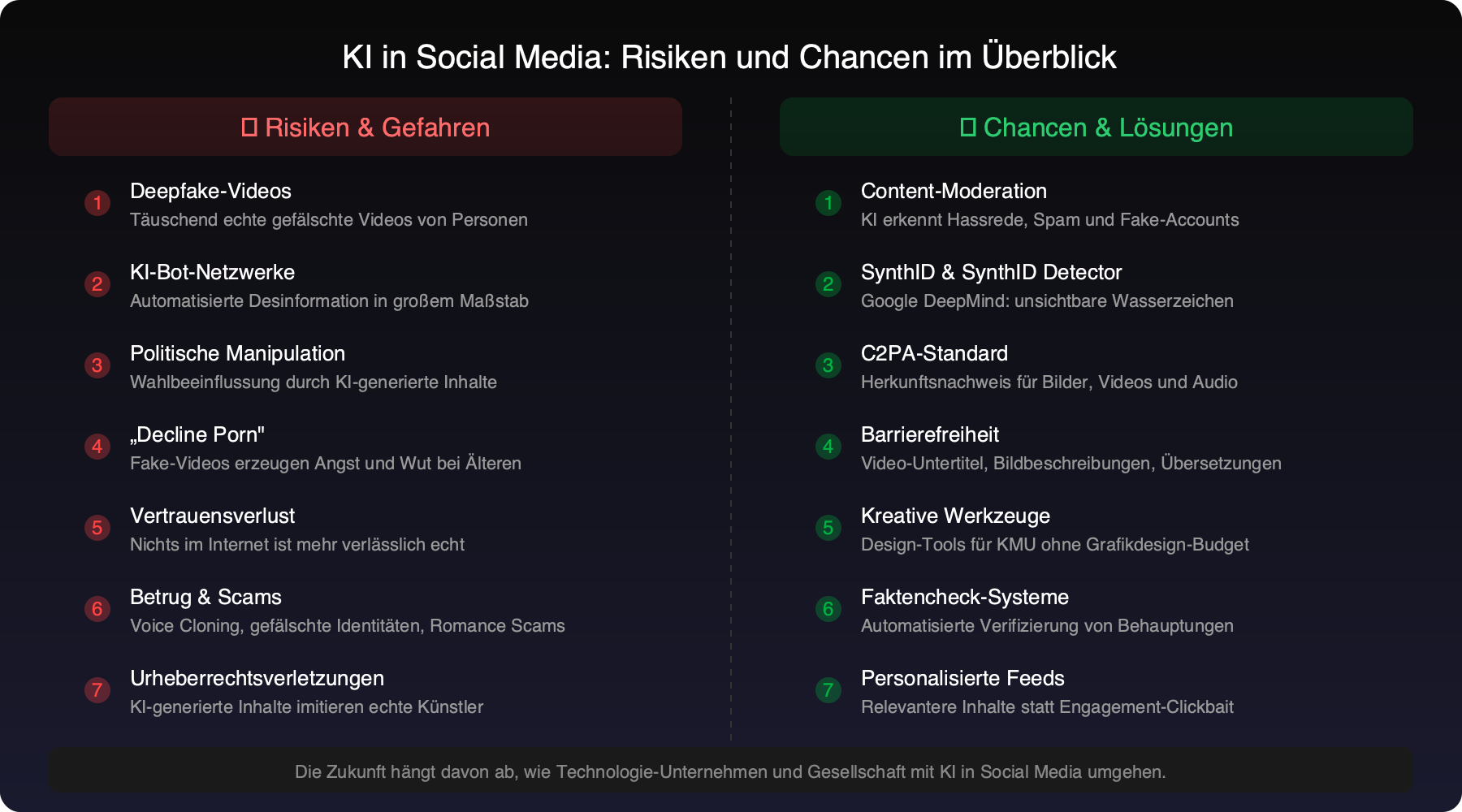

Risks at a Glance: What AI Is Doing to Social Media

The Other Side: What AI Does Right on Social Media

Despite all the justified concerns, it would be unfair — and factually incorrect — to portray AI on social media solely as a threat. The same technology that creates deepfakes also provides effective tools against disinformation and for creative, accessible use.

Real-Time Content Moderation

AI-powered moderation systems can detect hate speech, glorification of violence and spam faster and more consistently than human moderators alone. Meta already uses AI moderation for billions of posts per month and can often identify problematic content within seconds of upload. For SMBs running social media channels, this means less risk of their brand appearing alongside harmful content.

SynthID and Digital Watermarks

Google’s SynthID isn’t a perfect system, but it points in the right direction: invisible, robust watermarks that give content a verifiable digital provenance. Combined with advances in C2PA and the upcoming California AI Transparency Act, an ecosystem could emerge where users can check with a single click whether content was created by humans or machines.

Accessibility and Inclusion

AI-generated subtitles, automatic image descriptions and real-time translations make social media content more accessible to people with visual or hearing impairments than ever before. Instagram and TikTok offer AI-powered automatic captions that represent a significant gain in participation, particularly for deaf and hard-of-hearing users.

Creative Tools for Businesses

AI-powered tools enable even small businesses to create professional-looking social media content — from automatically generated text variations to intelligent image editing to personalized feed algorithms that deliver relevant content to the right audience. The democratization of creative tools is one of the positive sides of the AI revolution.

AI-Powered Fact-Checking

Tools like Reality Defender use machine learning to automatically detect deepfakes — regardless of whether the content carries a C2PA label or not. This approach of inference-based detection is an important corrective to the voluntary labeling system and can identify content deliberately distributed without labels. For businesses, such tools offer the ability to verify content authenticity before basing business decisions on it.
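How label-based and inference-based checks complement each other can be sketched in a few lines of Python. Everything here is an assumption for illustration: the `Post` fields, the score range and the 0.8 threshold are hypothetical and do not reflect the API or output of Reality Defender or any other product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Post:
    has_ai_label: bool               # a platform or provenance label is present
    detector_score: Optional[float]  # hypothetical detector output in [0, 1]; None if not run


def triage(post: Post, threshold: float = 0.8) -> str:
    """Combine a voluntary label with an inference-based score."""
    if post.has_ai_label:
        return "labeled as AI"
    if post.detector_score is None:
        return "unverified"
    if post.detector_score >= threshold:
        return "likely synthetic"
    # A low score is not proof of authenticity, only the absence of a signal.
    return "no signal"


print(triage(Post(has_ai_label=True, detector_score=None)))   # labeled as AI
print(triage(Post(has_ai_label=False, detector_score=0.93)))  # likely synthetic
print(triage(Post(has_ai_label=False, detector_score=0.10)))  # no signal
print(triage(Post(has_ai_label=False, detector_score=None)))  # unverified
```

The second case is the one labeling alone misses: content distributed without any label that a detector still flags.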

What Can You Do? Recommendations for Businesses and Individuals

For Businesses and SMBs

- Employee training on deepfake detection: Raise your team’s awareness of AI-generated content. Training on “voice cloning” and “CEO fraud” can prevent significant financial damage.

- Implement verification processes: Before responding to social media content in a business context, verify the source. Tools like SynthID Detector and Reality Defender can help.

- Label your own content: If you use AI tools for content creation, disclose this transparently. Authenticity is becoming a competitive advantage.

- Update your cybersecurity strategy: Voice-cloning-based fraud and deepfake phishing require new security protocols — especially for wire transfer approvals and identity verification.

- Social media monitoring: Monitor whether your company name or brand is being misused in AI-generated content.
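The security protocol for wire transfer approvals mentioned above could be written down as an explicit rule, sketched here in Python. The field names, the callback rule and the 10,000 euro threshold are assumptions chosen for illustration, not a recommended standard; adapt them to your own approval process.

```python
from dataclasses import dataclass, field


@dataclass
class TransferRequest:
    amount_eur: float
    requested_via: str                 # e.g. "phone", "video call", "email"
    callback_confirmed: bool = False   # confirmed by calling back a known number on file
    approvers: set = field(default_factory=set)


def may_execute(req: TransferRequest, dual_approval_threshold_eur: float = 10_000.0):
    """Return (allowed, reason). A voice or video request alone never suffices."""
    if not req.callback_confirmed:
        return False, "callback to a known number required"
    required = 2 if req.amount_eur >= dual_approval_threshold_eur else 1
    if len(req.approvers) < required:
        return False, f"{required} approver(s) required, {len(req.approvers)} given"
    return True, "ok"


urgent_call = TransferRequest(amount_eur=48_000, requested_via="phone")
print(may_execute(urgent_call))  # (False, 'callback to a known number required')

urgent_call.callback_confirmed = True
urgent_call.approvers = {"finance lead"}
print(may_execute(urgent_call))  # (False, '2 approver(s) required, 1 given')

urgent_call.approvers.add("managing director")
print(may_execute(urgent_call))  # (True, 'ok')
```

The point of the design is that a convincing cloned voice cannot satisfy the rule on its own: the callback goes to a number the attacker does not control, and larger amounts need a second person.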

For Individuals

- Make source verification a habit: Before sharing an emotional post, ask: Who published this? Are there independent confirmations?

- Use AI detection tools: Google’s SynthID Detector and similar tools can help identify synthetic content.

- Strengthen media literacy: Talk to older family members about deepfakes. Show examples — not to create fear, but to foster healthy skepticism toward content that looks too perfect.

- Break algorithmic bubbles: Deliberately follow sources that offer different perspectives. AI-driven feeds amplify existing beliefs.

- Not everything is fake: Just as important as healthy skepticism is not falling into complete cynicism. Serious journalism and genuine content still exist — they just need more attention.

Conclusion: Truth Becomes a Team Effort

AI-generated disinformation on social media isn’t a future scenario — it’s the present. Deepfakes, bot networks and synthetic content already influence how millions of people perceive the world, make political decisions and trust each other. The tech companies — from Meta to Google to X — profit from the attention economy that AI content fuels, and implement their own transparency measures only half-heartedly.

At the same time, initiatives like SynthID, C2PA and California’s AI Transparency Act demonstrate that countermeasures are possible. The fight against AI disinformation won’t be won by a single technology — but through the interplay of technical standards, legal regulation, independent research and individual media literacy.

For SMBs, this means: cybersecurity doesn’t end at the firewall. A company that doesn’t prepare its employees for AI disinformation is just as vulnerable as one without antivirus protection. The good news is that businesses can act proactively with the right training and processes. Hany Farid of UC Berkeley summed it up pragmatically: “I don’t think it solves the problem, but I think it takes a nice big chunk out of it.”

Frequently Asked Questions (FAQ)

How can I spot a deepfake?

Look for unnatural lip movements, inconsistent lighting, blurry hands and missing ambient sounds. Use tools like Google’s SynthID Detector or Reality Defender for technical verification. When in doubt: search for independent sources confirming the same incident.

Are older people particularly vulnerable?

Studies and reports, such as the BBC’s investigation into “decline porn,” show that people who didn’t grow up with AI-generated content find such content harder to identify. This doesn’t mean younger generations are immune, but the deliberate targeting of older demographics by disinformation creators is a documented phenomenon.

What is C2PA and does it protect me?

C2PA (Coalition for Content Provenance and Authenticity) is an industry standard that gives digital content a certificate of origin. It provides an important foundation but isn’t complete protection: participation is voluntary, labels can be removed, and not all platforms support it. X/Twitter doesn’t participate. Starting August 2026, California will become the first US state to require AI labeling by law.

What can businesses do against deepfake fraud?

Implement two-factor verification for all critical business decisions — especially wire transfers. Train employees regularly on voice cloning and phishing attacks. Use technical deepfake detection tools and establish clear escalation processes for suspicious communications. Professional cybersecurity consulting can help set up these processes.

Protect Your Business from AI Fraud and Disinformation

We help small and medium-sized businesses in Cologne and the surrounding area navigate the risks of AI disinformation with cybersecurity measures, AI strategy and employee training. Transparent, professionally grounded and without empty promises.